---

# Competition Heats Up Between CRI-O and containerd - Actually, That's Not a Thing...

**URL:** https://crunchtools.com/competition-heats-up-between-cri-o-and-containerd-actually-thats-not-a-thing/

Date: 2018-07-06

Author: fatherlinux

Post Type: post

Summary: Are you looking at CRI-O vs. containerd and wondering to yourself, "Which one should I use?" If you are... DON'T. That's not actually something you should be thinking about. Here's why... When it comes to containers, there are a ton of APIs in the ecosystem. Different users, community projects, and commercial products have made...

Categories: Articles

Tags: Container Engines, Container Runtime, Kubernetes, Linux

Featured Image: https://crunchtools.com/wp-content/uploads/2018/03/About.png

---

Are you looking at CRI-O vs. containerd and wondering to yourself, "Which one should I use?" If you are... DON'T. That's not actually something you should be thinking about. Here's why...

When it comes to containers, there are a ton of APIs in the ecosystem. Different users, community projects, and commercial products have made architectural decisions to integrate with different APIs depending on technical needs, knowledge and understanding, or ease of integration. This means users have had to think about all of these different APIs, but consolidation is coming. To demonstrate, let's walk through how a container gets created in a Kubernetes environment. At a high level, here is what happens conceptually:

```

Orchestration API -> Container Engine API -> Kernel API

```

Digging one level deeper, here's what it actually looks like today in most production setups:

```

Kubernetes Master -> Kubelet -> Docker Engine -> containerd -> runc -> Linux kernel

```

In OpenShift Online, and also in the OpenShift Container Platform product, we are moving to this architecture:

```

Kubernetes Master -> Kubelet -> CRI-O -> runc -> Linux kernel

```

In the coming months, some Kubernetes deployments could, in theory, look like this with containerd:

```

Kubernetes Master -> Kubelet -> containerd -> runc -> Linux kernel

```
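To make the point concrete, here is a toy Python model of the three call chains above. It is a sketch, not any real API; the layer names are simply the ones from the diagrams. It computes which layers every deployment shares and which ones vary:

```python
# Toy model of the container-creation call chains described above.
# Each chain is ordered from the orchestration layer down to the kernel.
STACKS = {
    "docker": ["Kubernetes Master", "Kubelet", "Docker Engine", "containerd", "runc", "Linux kernel"],
    "cri-o": ["Kubernetes Master", "Kubelet", "CRI-O", "runc", "Linux kernel"],
    "containerd": ["Kubernetes Master", "Kubelet", "containerd", "runc", "Linux kernel"],
}


def shared_layers(stacks):
    """Layers present in every chain, kept in top-to-bottom order."""
    chains = list(stacks.values())
    return [layer for layer in chains[0] if all(layer in chain for chain in chains[1:])]


def varying_layers(stacks):
    """For each chain, the layers that are not shared by all chains."""
    common = set(shared_layers(stacks))
    return {name: [layer for layer in chain if layer not in common]
            for name, chain in stacks.items()}


if __name__ == "__main__":
    print("shared:", shared_layers(STACKS))
    for name, layers in varying_layers(STACKS).items():
        print(f"{name:>10}: {layers}")
```

Only the engine-level entries differ between the three chains; the Kubernetes Master API at the top and runc plus the Linux kernel at the bottom are common to all of them, so the layer users integrate with stays the same whichever engine is underneath.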

There are a lot of different possible architectures, but the end user shouldn't have to care. Different tools have been written to integrate at every layer shown above, but the most important layer, and the one most end users should focus on, is the Kubernetes Master API. This is where they should learn, integrate, and focus their investment. This is the layer that will help users move faster, deploy apps better, and so on. The container engine is more like the wheel bearings in your car. When buying a new car, you want high-quality bearings that roll smoothly, but you don't ask the salesperson at the dealership what brand they are. They wouldn't even know. The parts people probably don't know. Even the technicians might not know. This is something the engineers and product managers worry about when they evaluate options in the supply chain. Users can focus on learning to drive the car better, not on the brand of bearings.

For the record, I just put new Timken bearings in my 2008 Jeep Grand Cherokee. They are great quality, priced right, and made locally (I live in Akron, OH). But I digress...

Now, if you are a nerd like me, that's not going to satisfy you. That's why I am going to go deeper. I always go deeper. I happen to be on the team that is helping build CRI-O and evaluating whether to use CRI-O or containerd, so I am going to share our thought process. First and foremost, we believe that CRI-O offers OpenShift customers the most benefit. CRI-O gives OpenShift users the ability to pull and run standard container images based on the [OCI specifications](https://www.opencontainers.org/) (Distribution, Image, and Runtime). It gives users lock-step stability with Kubernetes by following the Kubernetes version numbers, essentially taking an engineering integration point off the table. Finally, it provides users great observability through crictl, the command-line client for the Kubernetes Container Runtime Interface (CRI), and through standard open source libraries. These three benefits help customers focus on the higher-level Kubernetes API, which is what gives them a strategic advantage. But let's go deeper...
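The "lock-step" point can be expressed as a simple compatibility check. This is a minimal sketch that assumes the convention that a CRI-O release shares its major.minor number with the Kubernetes release it targets (for example, CRI-O 1.11.x with Kubernetes 1.11); the function names are hypothetical:

```python
def major_minor(version):
    """Parse a version such as 'v1.11.0' or '1.11.1' into (major, minor)."""
    parts = version.strip().lstrip("v").split(".")
    if len(parts) < 2:
        raise ValueError(f"expected at least major.minor, got {version!r}")
    return int(parts[0]), int(parts[1])


def crio_matches_kubernetes(crio_version, kube_version):
    """Assumed lock-step rule: CRI-O and Kubernetes must share major.minor."""
    return major_minor(crio_version) == major_minor(kube_version)


if __name__ == "__main__":
    print(crio_matches_kubernetes("1.11.1", "v1.11.0"))  # True: same minor release
    print(crio_matches_kubernetes("1.10.3", "v1.11.0"))  # False: one minor release apart
```

The design choice this illustrates is that the engine never has to be matched against an independent release train: pick the Kubernetes version, and the engine version follows from it.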

The container engine is only responsible for these three main things:

- Providing an API/user interface

- Pulling/Expanding images to disk

- Building a config.json
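The second responsibility, expanding images to disk, boils down to applying an image's layers in order. The sketch below models each layer as an in-memory mapping of path to content rather than a real tar archive, and implements the whiteout convention from the OCI image specification: a `.wh.<name>` entry deletes `<name>` from lower layers, and `.wh..wh..opq` hides everything lower layers put in that directory. Real engines stream tarballs into a storage driver such as overlay; `apply_layer` and `expand_image` are hypothetical names:

```python
import posixpath

WHITEOUT_PREFIX = ".wh."
OPAQUE_MARKER = ".wh..wh..opq"


def apply_layer(rootfs, layer):
    """Apply one layer (path -> content) on top of rootfs, honoring OCI whiteouts."""
    result = dict(rootfs)
    # Whiteouts only affect lower layers, so process them before adding new files.
    for path in layer:
        parent, name = posixpath.split(path)
        if name == OPAQUE_MARKER:
            # Opaque whiteout: hide every lower-layer entry under `parent`.
            prefix = parent + "/" if parent else ""
            for existing in list(result):
                if existing.startswith(prefix):
                    del result[existing]
        elif name.startswith(WHITEOUT_PREFIX):
            # Regular whiteout: delete the named entry and anything beneath it.
            target = posixpath.join(parent, name[len(WHITEOUT_PREFIX):])
            for existing in list(result):
                if existing == target or existing.startswith(target + "/"):
                    del result[existing]
    # Add this layer's regular files; whiteout markers are never materialized.
    for path, content in layer.items():
        if not posixpath.basename(path).startswith(WHITEOUT_PREFIX):
            result[path] = content
    return result


def expand_image(layers):
    """Expand an ordered list of layers (base layer first) into one rootfs."""
    rootfs = {}
    for layer in layers:
        rootfs = apply_layer(rootfs, layer)
    return rootfs


if __name__ == "__main__":
    base = {"etc/os-release": "base", "etc/app/a.conf": "a", "usr/bin/tool": "v1"}
    upper = {"usr/bin/.wh.tool": "", "etc/app/.wh..wh..opq": "",
             "etc/app/b.conf": "b", "srv/data": "x"}
    print(expand_image([base, upper]))
```

The upper layer deletes `usr/bin/tool`, replaces the contents of `etc/app/`, and adds `srv/data`; the end user never sees any of this, only the resulting filesystem.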

If you want an even deeper understanding, see [So, What Does a Container Engine Really Do Anyway?](http://crunchtools.com/so-what-does-a-container-engine-really-do-anyway/)

Now, when have you ever said to yourself, "You know, I really need to hack the config.json before it gets passed to runc"? Hopefully never. These operations are completely transparent to end users, who should rarely, if ever, want or need to tinker with image layers or manifest construction. But when you are providing one of the leading enterprise distributions of Kubernetes (Red Hat OpenShift Container Platform), you absolutely need to think about these things. You also have to think about product release schedules, sprint team planning, resource allocation, field enablement (aka technical sales and consulting training), customer education, etc. When you have to do all of these things, you need to choose a container engine that just works, move on, and focus on higher-level value for customers. I don't think this is rocket science, nor is it very contentious.
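The third responsibility listed earlier, building a config.json, is mostly a translation from the image's configuration (Entrypoint, Cmd, Env, User, WorkingDir) into the OCI runtime specification format that runc reads. Here is a minimal sketch of that translation. It assumes a numeric `User` value, defaults the group ID to 0 when none is given (a real engine would consult the image's /etc/passwd), and leaves out the mounts, capabilities, cgroup settings, and other fields a production config.json carries; `build_runtime_config` and its defaults are hypothetical:

```python
import json


def parse_numeric_user(user):
    """Parse an image 'User' of the form 'uid' or 'uid:gid' (numeric only).

    Assumption: named users are not resolved here, so int() raises ValueError.
    A missing gid defaults to 0 instead of the user's primary group.
    """
    if not user:
        return 0, 0  # no User in the image config: run as root
    uid, _, gid = user.partition(":")
    return int(uid), (int(gid) if gid else 0)


def build_runtime_config(image_config, rootfs="rootfs", hostname="sketch"):
    """Translate an OCI image config into a minimal OCI runtime config.json dict."""
    # Cmd supplies default arguments that are appended to Entrypoint.
    args = list(image_config.get("Entrypoint") or []) + list(image_config.get("Cmd") or [])
    if not args:
        raise ValueError("image config has neither Entrypoint nor Cmd")
    uid, gid = parse_numeric_user(image_config.get("User", ""))
    return {
        "ociVersion": "1.0.1",
        "process": {
            "terminal": False,
            "user": {"uid": uid, "gid": gid},
            "args": args,
            "env": list(image_config.get("Env") or []),
            "cwd": image_config.get("WorkingDir") or "/",
        },
        "root": {"path": rootfs, "readonly": False},
        "hostname": hostname,
        "linux": {
            # Give the container its own PID, network, IPC, UTS, and mount namespaces.
            "namespaces": [{"type": t} for t in ("pid", "network", "ipc", "uts", "mount")],
        },
    }


if __name__ == "__main__":
    example = {
        "Entrypoint": ["/usr/sbin/httpd"],
        "Cmd": ["-DFOREGROUND"],
        "Env": ["PATH=/usr/sbin:/usr/bin"],
        "User": "1001",
        "WorkingDir": "/var/www",
    }
    print(json.dumps(build_runtime_config(example), indent=2))
```

This is exactly the kind of plumbing the engine should hide: whichever engine writes the file, runc receives the same OCI runtime format.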

With the advent of the Moby project, containerd adoption just wasn't mature enough for the planning timeline an enterprise Kubernetes distribution requires. Even as I write this, no major Kubernetes distro has shipped containerd. When I say enterprise Kubernetes distro, what I really mean is a solution, not just slapping components together and redistributing them. An enterprise distribution has an entire supply chain of training, documentation, support enablement, technical enablement, field architects, performance testing, security testing, default configuration testing, etc. Most people don't realize what it takes to build an enterprise distribution of Linux or Kubernetes. When you have real customers, you have to check things, check them again, make backwards-compatibility decisions, document changes, and so on. There is a ton of work involved.

Most people are still using the Docker Engine. In a nutshell, that's why Red Hat is investing in CRI-O. Others, including SUSE, are also shipping CRI-O, which we think is a good sign. Furthermore, even with containerd, you still have to figure out how to troubleshoot the lower-level portions that aren't covered by crictl. Finally, you still have to solve container image builds. Long story short, it's a lot of hoopla about nothing.

Red Hat is contributing to an ecosystem of tools including CRI-O, Skopeo, Podman, and Buildah. They all leverage the same image and storage libraries (containers/image and containers/storage), and each one targets a small, focused use case, similar to the Unix coreutils. Together, they give customers more than just a way to run containers: they also cover builds, troubleshooting, and anything else users can come up with.

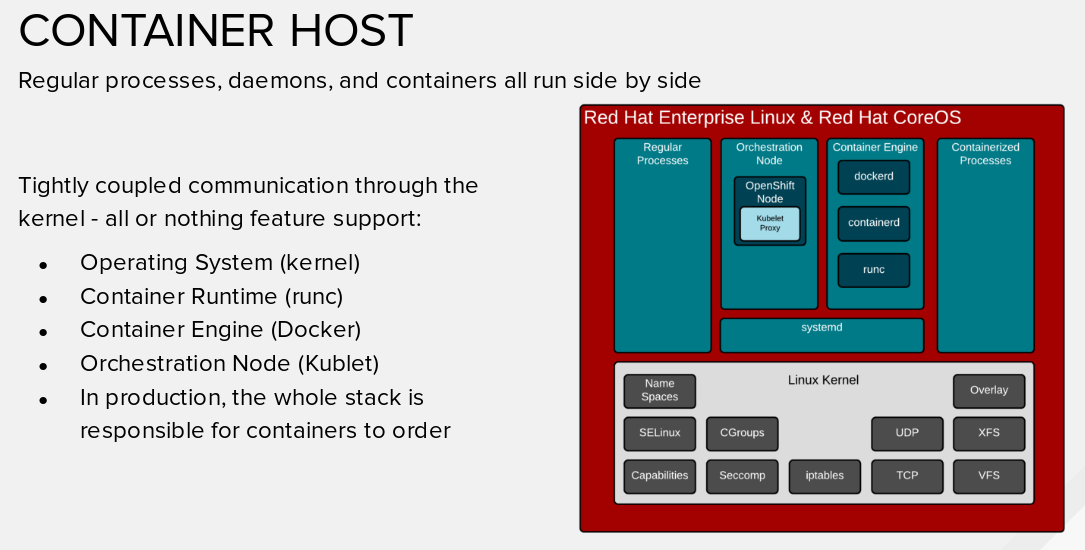

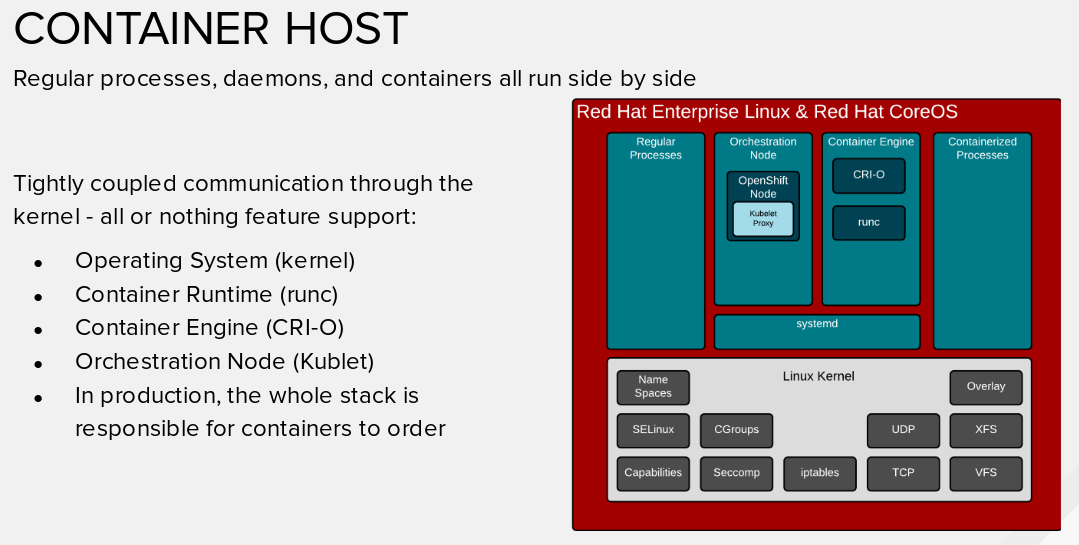

We are watching and evaluating every option, all the time. Right now, we believe CRI-O is the right choice for our customers. This frees customers to worry about the higher-level Kubernetes API, which is where their strategic advantage comes from. Below the container engine, the containers actually run on the Linux kernel, which is the bigger piece of the pie from a security, performance, and architectural perspective. The shim in between is just a very small, tactical layer that should only be a concern when troubleshooting. To put a pin in this, look at the diagrams below, which show all the components of a container host. Once you look at the big picture, it becomes pretty obvious that you shouldn't worry about the container engine. It's like the wheel bearings in your car: as long as somebody else is paying attention and sourcing the right ones, you can focus on driving your Kubernetes cluster. That is, until they become driverless; then you can just worry about interacting with the API ;-)

![Docker Engine container host diagram](http://crunchtools.com/wp-content/uploads/2018/07/Screenshot-from-2018-07-06-13-37-06.png)

**Docker Engine**

![CRI-O Engine container host diagram](http://crunchtools.com/wp-content/uploads/2018/07/Screenshot-from-2018-07-06-13-37-21.png)

**CRI-O Engine**

P.S. For the braver souls who want to go even deeper, check out the [Container Host](https://docs.google.com/presentation/d/1S-JqLQ4jatHwEBRUQRiA5WOuCwpTUnxl2d1qRUoTz5g/edit#slide=id.g3bde95007c_0_7) and [Container Standards](https://docs.google.com/presentation/d/1S-JqLQ4jatHwEBRUQRiA5WOuCwpTUnxl2d1qRUoTz5g/edit#slide=id.g3bcc34e6ea_0_69) sections of a presentation I am working on. As always, I'm happy to answer questions below...
