---

# Container Portability: Part 2

**URL:** https://crunchtools.com/container-portability-part-2/

Date: 2017-07-07

Author: fatherlinux

Post Type: post

Summary: In Container Portability: Part 1: A Brief History in Code Portability, we explored the genesis of code portability and visited structured computer organization to highlight the six commonly found levels in modern computing. Revisiting the six layers – nobody debates the portability of the upper two layers – Application Programmers know that C…

Categories: Articles

Tags: Container Engines, Container Portability, Container Runtime, Linux

Featured Image: https://crunchtools.com/wp-content/uploads/2017/06/Container-Portability-Series-Multi-Level-Computing.png

---

## Code Portability Today

In [Container Portability: Part 1: A Brief History in Code Portability](http://crunchtools.com/container-portability-part-1/), we explored the genesis of code portability and visited [structured computer organization](https://eleccompengineering.files.wordpress.com/2014/07/structured_computer_organization_5th_edition_801_pages_2007_.pdf) to highlight the six levels commonly found in modern computing. Revisiting the six layers - nobody debates the portability of the upper two layers - [Application Programmers](https://en.wikipedia.org/wiki/Application_software) know that C code, assembly, and all of the interpreted languages based on those two are generally portable - provided the code is retranslated (compiled, assembled) on the target hardware platform. Nor does anybody debate the non-portability of the lower three layers - [System Programmers](https://en.wikipedia.org/wiki/System_programming) understand that x86_64 code can generally only be run on x86_64 processors. People really don't debate this too much.

However, we have all kinds of misunderstandings at level 3 because [operating systems](https://en.wikipedia.org/wiki/Operating_system) are not well understood, even though we use them every single day and even though we have wide ranges of skills with [DevOps](http://www.jedi.be/blog/2010/02/12/what-is-this-devops-thing-anyway/) as a culture and [Full Stack Developers](http://codeup.com/what-is-a-full-stack-developer/). We have forgotten how operating systems work, at their core, because they mostly just work. But we need to understand that operating systems extend the level 2 instruction set for convenience - things like loading programs from disk, role based access controls, memory management, etc. Operating systems live in this no man's land between hardware and application software. With containers becoming so popular, we really need to invest in understanding the operating system again. It's not just for nerdy systems programmers (old school dudes with giant beards) anymore. Below is a lay of the land that demonstrates how we have a blind spot in how computers work - the old school, truly full stack developers are missing.

[](http://crunchtools.com/wp-content/uploads/2017/07/Container-Portability-Series-Base-Intermediate-Application-Images-1.png)

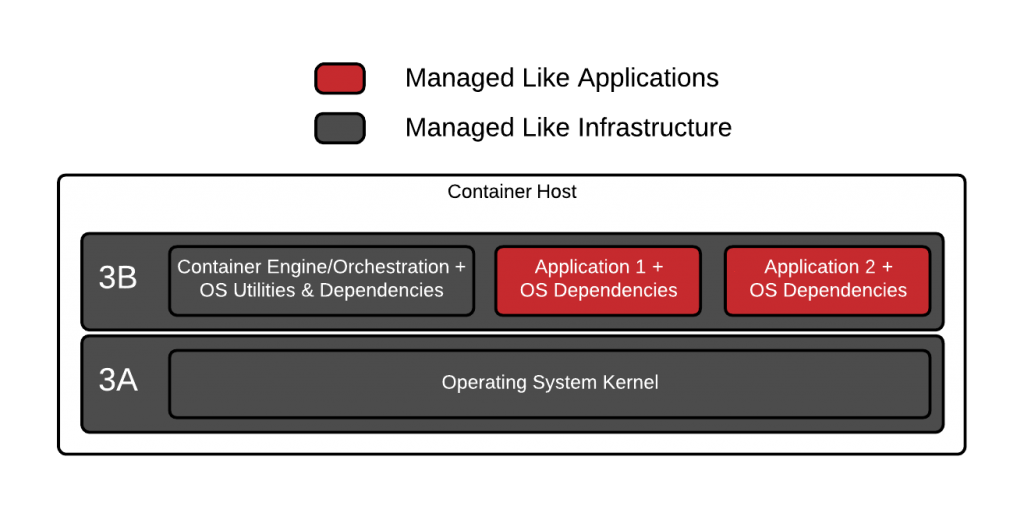

Let's dig deeper and understand why people are so confused. With Docker and Linux containers, we are basically breaking level 3 (the operating system) into two parts - let's call them 3A, which will represent the [kernel](https://en.wikipedia.org/wiki/Kernel_(operating_system)), and 3B, which will represent the [user space](https://en.wikipedia.org/wiki/User_space). The container host has one copy of 3A, which is shared between all of the [containers](http://sdtimes.com/guest-view-containers-really-just-fancy-files-fancy-processes/), [daemons](https://en.wikipedia.org/wiki/Daemon_(computing)) and utilities that run on it. This single kernel, which runs on the container host, allows the system to boot, enables the hardware, and provides a place for all of the containers to run (process management). The container host, and every container image on it, has its own copy of 3B. These container images can be copies of content from any mix of Fedora, Ubuntu, Debian, Gentoo, Alpine, CentOS, RHEL, or any other Linux distribution. They can even be custom built images that have their own version of glibc and other libraries built from scratch. There is nothing in the Linux operating system or container tooling that forces users to match the container images with the container host, and this can lead to both spatial and temporal problems.

![Level 3A and 3B](http://crunchtools.com/wp-content/uploads/2017/06/Container-Portability-Series-Level-3A-and-3B-1.png)
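To make the 3A/3B split a little more concrete, here is a minimal Python sketch that reports which kernel and which user space a process can see. It assumes the user space ships a standard `/etc/os-release` file (many minimal or scratch-built images do not), and the function names `parse_os_release` and `describe_layers` are made up for this illustration.

```python
import platform
from pathlib import Path


def parse_os_release(text):
    """Parse os-release style KEY=value lines into a dict."""
    fields = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines, comments, and anything that is not KEY=value.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        fields[key] = value.strip().strip('"').strip("'")
    return fields


def describe_layers(os_release_path="/etc/os-release"):
    """Report the kernel (3A) and user space (3B) visible to this process."""
    path = Path(os_release_path)
    user_space = parse_os_release(path.read_text()) if path.exists() else {}
    return {
        # Kernel release as reported by the running kernel (level 3A).
        "3A_kernel": platform.release(),
        # Distribution identity read from the local file system (level 3B).
        "3B_distro": user_space.get("ID", "unknown"),
        "3B_version": user_space.get("VERSION_ID", "unknown"),
    }


if __name__ == "__main__":
    for name, value in describe_layers().items():
        print(f"{name}: {value}")
```

Run on a container host and then inside a container on that host, the kernel line should stay the same while the distribution lines follow whatever image the container was started from.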

Even among Linux distributions built from very similar versions of the kernel, glibc, crypto libraries, etc, there can be incompatibilities in the way they were built or configured (spatial problems). Even two Fedora boxes, if configured completely differently, might not run the same container as expected. For example, I once moved a [container internals lab](https://github.com/fatherlinux/container-internals-lab) I was working on from container hosts configured with [Device Mapper](https://access.redhat.com/documentation/en-us/red_hat_enterprise_linux_atomic_host/7/html/managing_containers/managing_storage_with_docker_formatted_containers) to ones configured with [Overlay2](https://access.redhat.com/documentation/en-us/red_hat_enterprise_linux_atomic_host/7/html/managing_containers/managing_storage_with_docker_formatted_containers) and all kinds of things broke inside the containers. Heck, even yum didn't work right. These types of problems are compounded over time (temporal problems). Imagine taking an Ubuntu 17 image and running it on a Fedora 7 container host. Putting aside the fact that Fedora 7 was released long before Docker - would this work?

How do we build a test matrix to record and codify what will and won't work? Now imagine a day in the not too distant future where the versions are Ubuntu 31 and Fedora 57. What combinations of versions will work together, and who is testing the millions of permutations? Think about performance regressions, security regressions, and just plain architectural problems because implementations in the kernel and user space have changed over time. There is no cross Linux distribution API that defines the entire interface between 3A and 3B - no versions - it was always just assumed that you would build and run Fedora 25 binaries on Fedora 25 and Ubuntu 17 binaries on Ubuntu 17.
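As a rough illustration of how quickly such a test matrix grows, the sketch below pairs every host release with every image release. The release lists are hypothetical and deliberately tiny, and `releases`, `build_matrix`, and `is_matched` are names invented for this example. It only enumerates combinations; it says nothing about which ones actually work.

```python
from itertools import product

# Hypothetical release lists, for illustration only; a real matrix is far larger.
HOSTS = {"fedora": ["24", "25"], "ubuntu": ["16.04", "17.04"]}
IMAGES = {
    "fedora": ["24", "25"],
    "ubuntu": ["16.04", "17.04"],
    "alpine": ["3.5", "3.6"],
}


def releases(catalog):
    """Flatten {distro: [versions]} into a list of (distro, version) pairs."""
    return [(distro, version)
            for distro, versions in catalog.items()
            for version in versions]


def build_matrix(hosts, images):
    """Pair every host release with every image release."""
    return list(product(releases(hosts), releases(images)))


def is_matched(host, image):
    """True when host and image are the same distribution and version."""
    return host == image


if __name__ == "__main__":
    matrix = build_matrix(HOSTS, IMAGES)
    matched = [pair for pair in matrix if is_matched(*pair)]
    print(f"{len(matrix)} host/image combinations, {len(matched)} matched")
```

Even with four host releases and six image releases there are 24 combinations, and only 4 of them are the matched case that distributions have traditionally built and tested for. Add storage drivers, kernel configurations, and years of releases, and the count climbs very quickly.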

The distribution type and version were "a thing" and that made it easy. Fedora binaries are built with a Fedora compiler, with the build flags that make sense in a given version of Fedora. Same with Debian, Ubuntu, and any other distribution of Linux. In fact, hardcore Gentoo users (I must confess that I did this) were so obsessed with compatibility that they went as far as compiling the kernel, the compiler and all of the binaries on the exact hardware that they were using. This was done to eke out every last bit of performance - this was called a [stage 1 installation](https://wiki.gentoo.org/wiki/FAQ#How_do_I_install_Gentoo_using_a_stage1_or_stage2_tarball.3F). Mega compatibility.

Since there is no fully defined, cross distribution API definition between levels 3A and 3B, we rely on the Linux [system call interface](http://cs.lmu.edu/~ray/notes/linuxsyscalls/). The [Linux system call interface](https://en.wikipedia.org/wiki/Linux_kernel_interfaces) is very stable, which has tricked us into believing that *this* is enough. That's because a simple web server doesn't need much from the kernel - basically just system calls to open files and open sockets. But, as containers have become more and more popular, people are trying to tackle more and more workloads with them. As containerized workloads expand beyond simple web servers, compatibility becomes more and more of a problem. As I described [here](https://en.wikipedia.org/wiki/Linux_kernel_interfaces), there **can** and **will** be all kinds of changes over time. With the consumption of more and more specialized hardware like [FPGAs on Amazon AWS](https://aws.amazon.com/ec2/instance-types/f1/) or [Solarflare](https://www.solarflare.com/) hardware in [High Performance Computing (HPC)](https://en.wikipedia.org/wiki/Supercomputer) environments, as well as architectural changes to different Linux distributions (how many times have /dev and /proc changed?), things will get more difficult.
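To show how little a simple web server asks of the kernel, here is a minimal Python sketch that reads a file and sends it over a loopback TCP connection. It is not a real web server, `serve_file_once` is a name invented for this example, and the comments only name the system call family each step roughly maps to on Linux; the exact calls vary with the libc and kernel version.

```python
import os
import socket
import tempfile


def serve_file_once(path):
    """Read a file and push it through one loopback TCP connection."""
    fd = os.open(path, os.O_RDONLY)              # open(2) / openat(2)
    try:
        payload = os.read(fd, 65536)             # read(2); enough for a small demo file
    finally:
        os.close(fd)                             # close(2)

    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)  # socket(2)
    try:
        server.bind(("127.0.0.1", 0))            # bind(2); port 0 lets the kernel pick one
        server.listen(1)                         # listen(2)
        client = socket.create_connection(server.getsockname())  # socket(2) + connect(2)
        try:
            conn, _ = server.accept()            # accept(2) / accept4(2)
            conn.sendall(payload)                # send(2) / sendto(2)
            conn.close()                         # closing signals end of data to the client
            chunks = []
            while True:
                chunk = client.recv(4096)        # recv(2) / recvfrom(2)
                if not chunk:
                    break
                chunks.append(chunk)
            return b"".join(chunks)
        finally:
            client.close()
    finally:
        server.close()


if __name__ == "__main__":
    with tempfile.NamedTemporaryFile(delete=False) as handle:
        handle.write(b"hello from user space\n")
    try:
        print(serve_file_once(handle.name))
    finally:
        os.remove(handle.name)
```

Everything here leans on a small, old, and very stable corner of the kernel interface, which is why this kind of workload tends to move between distributions without complaint. Workloads that reach for device nodes, `/proc`, or specialized drivers touch far more of the boundary between 3A and 3B.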

I would not advocate for the Gentoo Stage 1 level of compatibility, but I would warn people that just because containers make it so easy to move binaries between distributions doesn't mean you should. People are experimenting, and heck, it works most of the time, but it's just that - an experiment. So, what's the solution? Well, there are several different adventures to choose from, which we will explore in [Container Portability: Part 3: The Paths Forward](http://crunchtools.com/container-portability-part-3/).
